Golang memory arenas [101 guide]

Go 1.20 introduced an experimental arena package that lets you allocate many objects from a contiguous region of memory and free them all at once — bypassing the garbage collector entirely. The package remains experimental and its future is uncertain, but arenas are a valuable concept for understanding Go memory management and writing high-performance code.

The arena package is experimental and on hold indefinitely. The Go team has made no guarantees about compatibility or its continued existence. It has not been promoted to a stable package and may be removed in a future release.

The problem: garbage collection overhead

Go's garbage collector automatically frees unreachable objects, which simplifies development and ensures memory safety. But this convenience has a cost:

- CPU overhead: large programs can spend a significant portion of CPU time tracing and collecting garbage, especially when allocation rates are high.

- Memory overhead: the runtime lets garbage accumulate between collections, so heap usage is often 2-3x the live data set (with the default GOGC=100, the target heap size alone is about twice the live heap).

- Latency spikes: Go's GC is mostly concurrent, but each cycle still needs brief stop-the-world pauses at the start and end of the mark phase, and these can affect tail latencies.

For most applications these tradeoffs are acceptable. But when you're processing millions of short-lived objects — parsing protocol buffers, handling HTTP request batches, or building temporary data structures — the GC spends considerable effort tracking objects that could be freed in bulk.
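To get a rough feel for this cost in your own program, you can compare runtime.MemStats before and after an allocation-heavy loop. The sketch below is only an illustration: the item type, the sink variable, and the measureChurn helper are hypothetical names, and the numbers it prints depend on the machine and the GC settings.

```go
package main

import (
	"fmt"
	"runtime"
	"time"
)

// item is a hypothetical short-lived object used only for this illustration.
type item struct {
	id   int
	data [64]byte
}

// sink is package-level so the compiler must heap-allocate every item.
var sink *item

// gcStats summarizes the GC activity observed while a workload ran.
type gcStats struct {
	Mallocs uint64        // heap objects allocated
	Cycles  uint32        // completed GC cycles
	Pause   time.Duration // total stop-the-world pause time
}

// measureChurn allocates n short-lived items and reports the GC activity
// recorded by the runtime during the loop.
func measureChurn(n int) gcStats {
	var before, after runtime.MemStats
	runtime.ReadMemStats(&before)
	for i := 0; i < n; i++ {
		sink = &item{id: i} // the previous item becomes garbage immediately
	}
	runtime.ReadMemStats(&after)
	return gcStats{
		Mallocs: after.Mallocs - before.Mallocs,
		Cycles:  after.NumGC - before.NumGC,
		Pause:   time.Duration(after.PauseTotalNs - before.PauseTotalNs),
	}
}

func main() {
	s := measureChurn(1_000_000)
	fmt.Printf("allocations=%d gc-cycles=%d total-pause=%s\n", s.Mallocs, s.Cycles, s.Pause)
}
```

Every one of these objects has the same short lifetime, yet the collector has to discover that independently on each cycle. That is the kind of work bulk freeing avoids.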

How memory arenas work

A memory arena (also called a region or zone allocator) is a simple concept:

- Allocate a large block of contiguous memory upfront.

- Bump a pointer forward for each new allocation — no free lists, no size classes, no per-object bookkeeping.

- Free everything at once by discarding the entire block when you're done.

This makes individual allocations extremely fast (just a pointer increment) and eliminates GC pressure for all arena-allocated objects. The tradeoff is that you cannot free individual objects — it's all or nothing.

┌──────────────────────────────────────────┐
│            Arena Memory Block            │
├──────┬──────┬──────┬─────────────────────┤
│ Obj1 │ Obj2 │ Obj3 │    (free space)     │
└──────┴──────┴──────┴─────────────────────┘
                      ↑
                 next alloc

On 64-bit systems, Go's implementation uses distinct virtual address ranges for each arena. When an arena is freed, the runtime unmaps the memory so that any subsequent access triggers a fault, which makes use-after-free bugs detectable instead of letting them silently corrupt memory.
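The bump-pointer idea can be sketched in a few lines of ordinary Go. This is a toy model that hands out byte ranges from one pre-allocated buffer, not how the Go runtime implements arenas. The bumpArena type and its methods are hypothetical names, and alignment here is relative to the start of the buffer rather than to an absolute address.

```go
package main

import "fmt"

// bumpArena is a hypothetical toy allocator that hands out byte ranges from
// one pre-allocated block. It only illustrates the bump-pointer idea.
type bumpArena struct {
	buf  []byte // the contiguous block, allocated once
	next int    // offset of the first free byte
}

func newBumpArena(size int) *bumpArena {
	return &bumpArena{buf: make([]byte, size)}
}

// alloc reserves n bytes at an offset that is a multiple of align
// (align must be a power of two). It returns nil when the block is exhausted.
func (a *bumpArena) alloc(n, align int) []byte {
	start := (a.next + align - 1) &^ (align - 1) // round up to the alignment
	if start+n > len(a.buf) {
		return nil
	}
	a.next = start + n // "bump" the offset past the new allocation
	// Cap the slice at its length so append cannot overwrite a neighbour.
	return a.buf[start:a.next:a.next]
}

// reset releases every allocation at once by rewinding the offset.
func (a *bumpArena) reset() { a.next = 0 }

func main() {
	a := newBumpArena(64)
	x := a.alloc(3, 1) // occupies offsets 0..2
	y := a.alloc(8, 8) // rounded up to offset 8, occupies 8..15
	fmt.Println(len(x), len(y), a.next) // 3 8 16
	fmt.Println(a.alloc(100, 1) == nil) // true: not enough space left
	a.reset()
	fmt.Println(a.next) // 0
}
```

After reset, x and y still point into the buffer and will be overwritten by later allocations. This is the toy equivalent of a use-after-free.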

Using the arena package

The arena package is available in Go 1.20+ when the go command is run with the GOEXPERIMENT=arenas environment variable:

GOEXPERIMENT=arenas go run main.go

The API has three core functions — arena.NewArena(), arena.New[T](), and arena.MakeSlice[T]():

import (
	"arena"
	"fmt"
	"net/http"
)

type T struct {
	Foo string
	Bar [16]byte
}

func processRequest(req *http.Request) {
	// Create an arena at the start of the function.
	mem := arena.NewArena()
	// Free all arena-allocated memory when done.
	defer mem.Free()

	// Allocate individual objects from the arena.
	for i := 0; i < 10; i++ {
		obj := arena.New[T](mem)
		// The T value lives in the arena; the string bytes produced by
		// fmt.Sprintf are still allocated on the regular heap.
		obj.Foo = fmt.Sprintf("item-%d", i)
	}

	// Allocate a slice with the given length and capacity.
	slice := arena.MakeSlice[T](mem, 100, 200)
	_ = slice // placeholder use; Go rejects unused local variables

	// All arena objects are freed when mem.Free() runs; the GC is not involved.
}

Cloning objects to the heap

If you need an object to outlive the arena, use arena.Clone to get a shallow heap-allocated copy:

mem := arena.NewArena()

obj1 := arena.New[T](mem) // arena-allocated

obj1.Foo = "important"

obj2 := arena.Clone(obj1) // heap-allocated copy

fmt.Println(obj2 == obj1) // false

mem.Free()

// obj1 is now invalid, but obj2 can be safely used

fmt.Println(obj2.Foo) // "important"

Reflection support

You can also allocate arena objects dynamically with the reflect package:

var typ = reflect.TypeOf((*T)(nil)).Elem()

mem := arena.NewArena()

defer mem.Free()

value := reflect.ArenaNew(mem, typ)

obj := value.Interface().(*T)

obj.Foo = "reflected"

When to use memory arenas

Arenas work best when objects share a clear, bounded lifetime — you allocate many objects, use them briefly, and discard them together:

- Request-scoped processing: HTTP handlers or RPC methods that decode a request, build intermediate structures, produce a response, and discard everything. This is the canonical arena use case.

- Protocol buffer decoding: unmarshalling a large protobuf message creates a tree of objects that are processed and then discarded. Google reported this as one of the primary motivations for the proposal.

- Batch data pipelines: parsing CSV rows, JSON documents, or log lines in bulk where each batch is independent and short-lived.

- Tree/graph construction: algorithms that build temporary trees (e.g., AST parsing, binary trees, tries) and discard them after computing a result.

Detecting bugs with address sanitizer

Because arenas introduce manual lifetime management, use-after-free bugs become possible. Go provides built-in support for the address sanitizer (-asan) and memory sanitizer (-msan) to catch these errors during development and testing.

For example, this program accesses an arena-allocated object after it's been freed:

package main

import (
	"arena"
)

type T struct {
	Num int
}

func main() {
	mem := arena.NewArena()
	o := arena.New[T](mem)
	mem.Free()
	o.Num = 123 // incorrect: use after free
}

Running with -asan produces a clear error message pointing to the exact line:

GOEXPERIMENT=arenas go run -asan main.go

accessed data from freed user arena 0x40c0007ff7f8
fatal error: fault
[signal SIGSEGV: segmentation violation code=0x2 addr=0x40c0007ff7f8 pc=0x4603d9]

goroutine 1 [running]:
runtime.throw({0x471778?, 0x404699?})
	/go/src/runtime/panic.go:1047 +0x5d fp=0x10c000067ef0 sp=0x10c000067ec0 pc=0x43193d
runtime.sigpanic()
	/go/src/runtime/signal_unix.go:851 +0x28a fp=0x10c000067f50 sp=0x10c000067ef0 pc=0x445b8a
main.main()
	/workspace/main.go:15 +0x79 fp=0x10c000067f80 sp=0x10c000067f50 pc=0x4603d9
runtime.main()
	/go/src/runtime/proc.go:250 +0x207 fp=0x10c000067fe0 sp=0x10c000067f80 pc=0x434227
runtime.goexit()
	/go/src/runtime/asm_amd64.s:1598 +0x1 fp=0x10c000067fe8 sp=0x10c000067fe0 pc=0x45c5a1

Limitations and gotchas

Slices cannot grow in-place

You can allocate slices with arena.MakeSlice, but append will move the slice to the heap if it needs to grow:

// Pre-allocate with sufficient capacity to avoid append-triggered escapes.

slice := arena.MakeSlice[string](mem, 0, 100)

slice = append(slice, "ok") // stays in arena (capacity available)

// But if capacity is exceeded:

small := arena.MakeSlice[string](mem, 0, 0)

small = append(small, "escaped") // silently moves to the heap

If you don't know the size upfront, consider a linked list which can grow by allocating individual nodes from the arena.
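A minimal sketch of such a list is below. The node and list types and the alloc callback are hypothetical names chosen for this illustration. The list takes every node from a caller-supplied function, so the example runs without GOEXPERIMENT=arenas by using the heap as a stand-in. With arenas enabled, the callback would wrap arena.New instead, as noted in the comment.

```go
package main

import "fmt"

// node and list are hypothetical names for this sketch.
type node[T any] struct {
	val  T
	next *node[T]
}

// list is a singly linked list that obtains every node from alloc.
type list[T any] struct {
	head, tail *node[T]
	size       int
	alloc      func() *node[T]
}

func newList[T any](alloc func() *node[T]) *list[T] {
	return &list[T]{alloc: alloc}
}

// push appends v in O(1) by allocating exactly one new node.
// Existing nodes never move, so growth cannot trigger a reallocation.
func (l *list[T]) push(v T) {
	n := l.alloc()
	n.val = v
	n.next = nil
	if l.tail == nil {
		l.head = n
	} else {
		l.tail.next = n
	}
	l.tail = n
	l.size++
}

// each calls fn for every value in insertion order.
func (l *list[T]) each(fn func(T)) {
	for n := l.head; n != nil; n = n.next {
		fn(n.val)
	}
}

func main() {
	// Heap stand-in so the sketch runs without GOEXPERIMENT=arenas.
	// With arenas enabled, the allocator would instead be:
	//   func() *node[string] { return arena.New[node[string]](mem) }
	l := newList[string](func() *node[string] { return new(node[string]) })
	for _, s := range []string{"a", "b", "c"} {
		l.push(s)
	}
	l.each(func(s string) { fmt.Print(s, " ") })
	fmt.Println(l.size) // prints: a b c 3
}
```

The tradeoff compared with a slice is one pointer of overhead per element and no random access.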

No map support

Arenas don't support maps. You can work around this with a user-defined generic map that accepts an arena for internal node allocations, or use a slice of key-value pairs for small collections.
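The slice-of-pairs workaround might look like the sketch below. The entry and smallMap types are hypothetical, lookups are linear scans, and the backing slice is created with make so the example runs without the experiment. With arenas enabled, the backing slice would come from arena.MakeSlice instead. Insertion refuses to exceed the fixed capacity so that append never reallocates onto the heap.

```go
package main

import "fmt"

// entry and smallMap are hypothetical names for this sketch.
type entry[K comparable, V any] struct {
	key K
	val V
}

// smallMap is a linear-scan map intended for small collections.
type smallMap[K comparable, V any] struct {
	entries []entry[K, V]
}

// newSmallMap wraps a caller-provided backing slice with a fixed capacity.
func newSmallMap[K comparable, V any](backing []entry[K, V]) *smallMap[K, V] {
	return &smallMap[K, V]{entries: backing[:0]}
}

// set updates an existing key or inserts a new one. It returns false when
// the backing slice is full, so append never grows past the capacity.
func (m *smallMap[K, V]) set(k K, v V) bool {
	for i := range m.entries {
		if m.entries[i].key == k {
			m.entries[i].val = v
			return true
		}
	}
	if len(m.entries) == cap(m.entries) {
		return false
	}
	m.entries = append(m.entries, entry[K, V]{key: k, val: v})
	return true
}

// get returns the value stored for k, if any.
func (m *smallMap[K, V]) get(k K) (V, bool) {
	for _, e := range m.entries {
		if e.key == k {
			return e.val, true
		}
	}
	var zero V
	return zero, false
}

func main() {
	// Heap stand-in; with arenas enabled the backing slice would be:
	//   arena.MakeSlice[entry[string, int]](mem, 0, 8)
	m := newSmallMap[string, int](make([]entry[string, int], 0, 8))
	m.set("a", 1)
	m.set("b", 2)
	m.set("a", 10) // overwrites the existing key
	v, ok := m.get("a")
	fmt.Println(v, ok) // 10 true
	_, ok = m.get("z")
	fmt.Println(ok) // false
}
```

Linear scans are only reasonable for a handful of keys. Larger collections would need a proper hash table built on arena-allocated buckets.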

No direct string allocation

Strings can't be allocated directly from an arena, but you can work around it with []byte and unsafe:

src := "source string"

mem := arena.NewArena()

defer mem.Free()

bs := arena.MakeSlice[byte](mem, len(src), len(src))

copy(bs, src)

str := unsafe.String(&bs[0], len(bs))

// str is only valid while mem is alive

Be very careful: arena-allocated strings become dangling pointers after Free(). Always use the address sanitizer during development.

Nil arenas are invalid

You can't pass nil as an arena to fall back to heap allocation:

obj := arena.New[Object](nil) // panics

Because arena.New is a generic package function (not a method), you can't define an Allocator interface. This means arena and non-arena code paths must be separate, which makes adoption more invasive.

No individual free

You cannot free individual objects — only the entire arena. If your workload has objects with significantly different lifetimes, you may need multiple arenas or a different approach entirely.

Performance

Real-world results at Google

By using memory arenas internally, Google reported up to 15% savings in both CPU and memory across several large production applications. The gains came primarily from reduced GC tracing time and lower heap memory usage.

Benchmark: Binary Trees

Allocation-heavy benchmarks show even more dramatic improvements. Using the Binary Trees benchmark from the Computer Language Benchmarks Game, we can modify the allocation function to optionally use an arena:

func allocTreeNode(a *arena.Arena) *Tree {
	if a != nil {
		return arena.New[Tree](a)
	}
	return &Tree{}
}

Without arenas:

/usr/bin/time go run arena_off.go

77.27user 1.28system 0:07.84elapsed 1001%CPU (0avgtext+0avgdata 532156maxresident)k

With arenas:

GOEXPERIMENT=arenas /usr/bin/time go run arena_on.go

35.25user 5.71system 0:05.09elapsed 803%CPU (0avgtext+0avgdata 385424maxresident)k

| Metric | Without arenas | With arenas | Change |
|---|---|---|---|
| User CPU (s) | 77.27 | 35.25 | -54% |
| Wall time (s) | 7.84 | 5.09 | -35% |
| Max RSS (MB) | 532 | 385 | -28% |

The wall time improvement is 35%, but the more telling metric is the 54% reduction in user CPU time — most of that savings comes from eliminating GC work. System time increases (1.28s → 5.71s) because the arena implementation relies on mmap/munmap syscalls, but this is far outweighed by the GC savings.
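The percentages follow directly from the /usr/bin/time output shown earlier. As a quick check, the small program below recomputes them. The pctChange helper is a hypothetical name, and the inputs are the raw figures from the two runs.

```go
package main

import "fmt"

// pctChange returns the relative change from before to after, in percent.
func pctChange(before, after float64) float64 {
	return (after - before) / before * 100
}

func main() {
	fmt.Printf("user CPU:   %+.0f%%\n", pctChange(77.27, 35.25))   // -54%
	fmt.Printf("wall time:  %+.0f%%\n", pctChange(7.84, 5.09))     // -35%
	fmt.Printf("max RSS:    %+.0f%%\n", pctChange(532156, 385424)) // -28%
	fmt.Printf("system CPU: %+.0f%%\n", pctChange(1.28, 5.71))     // +346%
}
```

In absolute terms the extra system time is about 4.4 seconds, against roughly 42 seconds of user CPU saved.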

Production alternatives

Because the arena package remains experimental, production Go applications typically use other techniques to reduce GC pressure:

sync.Pool — the standard library's sync.Pool reuses previously allocated objects across garbage collection cycles. Unlike arenas, sync.Pool is stable and widely used:

import (
	"bytes"
	"sync"
)

var bufPool = sync.Pool{
	New: func() any {
		return new(bytes.Buffer)
	},
}

func process() {
	buf := bufPool.Get().(*bytes.Buffer)
	defer bufPool.Put(buf)
	buf.Reset()
	// use buf...
}

Pre-allocated slices — allocating slices with a known capacity upfront avoids repeated allocations during append:

results := make([]Result, 0, expectedCount)

GOGC and GOMEMLIMIT — tuning the garbage collector with GOGC (GC frequency) and GOMEMLIMIT (Go 1.19+, soft memory limit) can significantly reduce GC overhead without changing your code.
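Both settings are normally supplied as environment variables (for example GOGC=200 GOMEMLIMIT=1GiB ./app), but the same knobs are also available at runtime through the runtime/debug package. In the sketch below, the tuneGC helper is a hypothetical name, and the values 200 and 1 GiB are purely illustrative assumptions, not recommendations.

```go
package main

import (
	"fmt"
	"runtime/debug"
)

// tuneGC applies a GC percentage and a soft memory limit (in bytes) and
// returns the settings that were in effect before the call.
func tuneGC(percent int, limit int64) (prevPercent int, prevLimit int64) {
	// Same effect as the GOGC environment variable.
	prevPercent = debug.SetGCPercent(percent)
	// Same effect as the GOMEMLIMIT environment variable (Go 1.19+).
	prevLimit = debug.SetMemoryLimit(limit)
	return prevPercent, prevLimit
}

func main() {
	// GOGC=200 lets the heap grow to about three times the live data before
	// the next collection: fewer GC cycles in exchange for more memory.
	prevPercent, prevLimit := tuneGC(200, 1<<30)
	fmt.Println("previous GOGC:", prevPercent)
	fmt.Println("previous memory limit (bytes):", prevLimit)
}
```

A higher GOGC value trades memory for CPU, and the memory limit keeps that tradeoff from growing the heap beyond what the machine can afford.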

Memory arenas in Uptrace

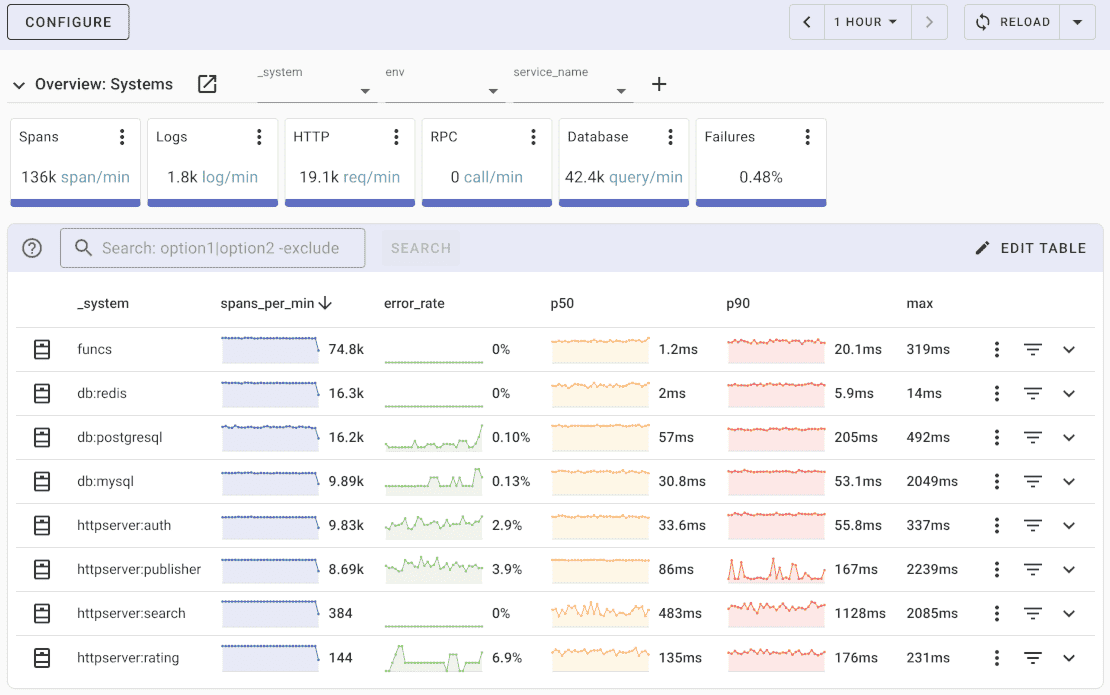

Uptrace is an open source APM tool written in Go. You can use it to monitor applications and set up alerts to receive notifications via email, Slack, Telegram, and more.

Uptrace receives data from OpenTelemetry in large batches (1k-10k items). In practice, Uptrace relies on sync.Pool and pre-allocated buffers to minimize allocations during span and metric ingestion. If the arena package ever becomes stable, it would be a natural fit for Protobuf decoding and batch processing, where thousands of objects are allocated, processed, and discarded together.

Conclusion

Memory arenas demonstrate that significant performance gains — up to 54% CPU reduction in allocation-heavy workloads — are possible by giving the programmer control over object lifetimes. The core idea is simple: when many objects share the same lifetime, allocate them together and free them together.

However, the experimental arena package is on hold indefinitely due to API design concerns, so you should rely on production-ready techniques like sync.Pool, pre-allocated slices, and GC tuning for now. If you want to experiment with arenas, use -asan during development and keep arena usage isolated behind clear abstraction boundaries so it can be easily removed.

Acknowledgements

This post is based on the arena package proposal by Dan Scales and the arena performance experiment by thepudds.
